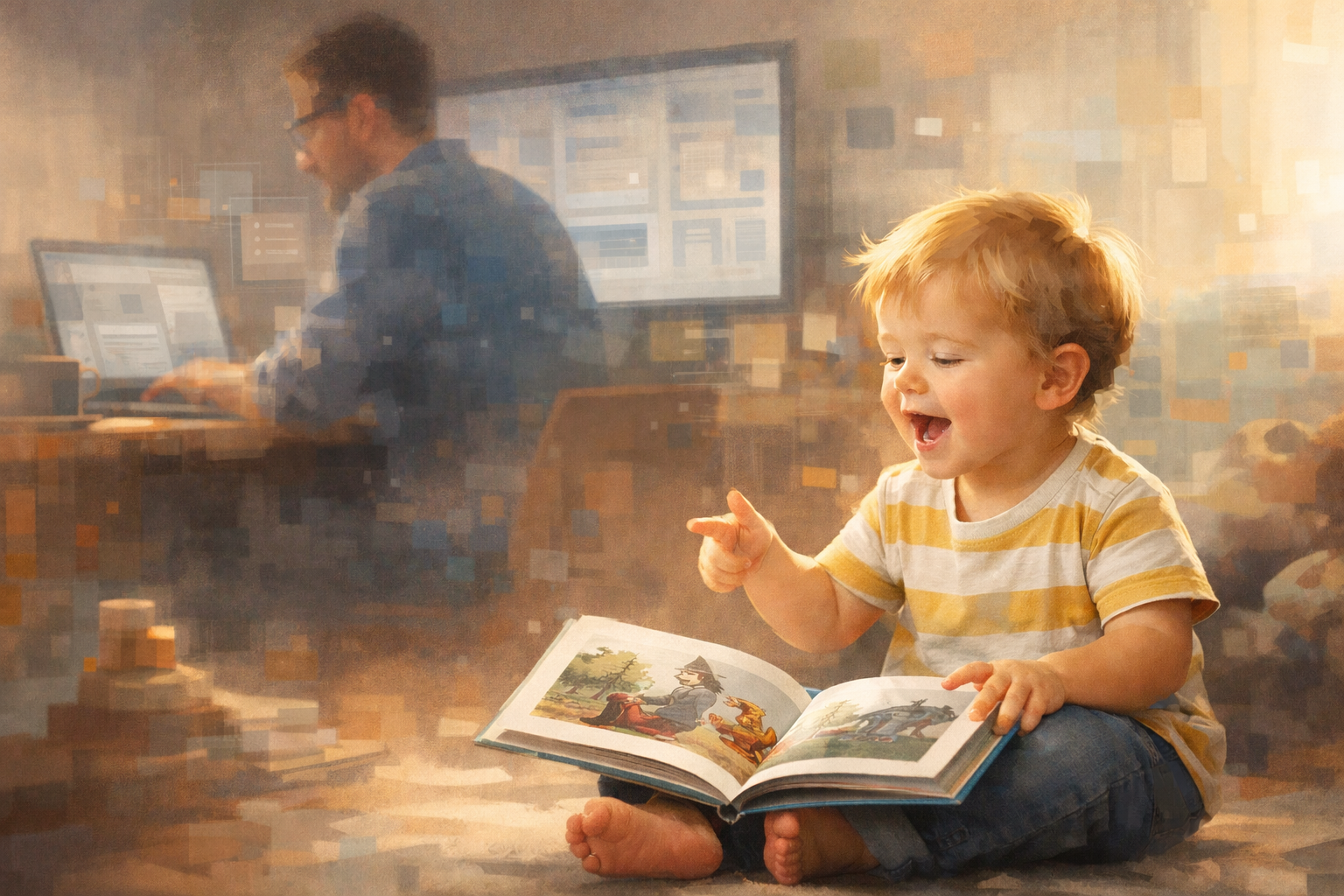

My 2-year-old recently started "reading" his books back to me.

He doesn't know the words. He just holds the book up, stares at the pictures, and narrates a completely made-up story with the conviction of someone who has done extensive research. The plot changes every time. The confidence never does.

I find this funnier than I probably should. Meanwhile, I'm at my desk asking an AI to help me name layers in Figma.

So yes, I'm thinking a lot about the future of education. Mostly because I'm a senior product designer working in the AI space, and also because I'm the father of a 2-year-old who will grow up in a world where "knowing stuff" is no longer the scarce resource.

The thing that pushed this from background anxiety to "I should write this down" was Matt Shumer's essay, "Something Big Is Happening." If you've read it, you know the vibe: early-internet energy, exponential curves, and a strong implication that many screen-based jobs are about to get weird faster than we're emotionally prepared for.

I don't want to write a fear piece. I want to write a "what do we do with this?" piece. Especially as a parent.

School was built for a different kind of scarcity

Traditional education (at its best) did two things well:

- Transferred knowledge efficiently

- Sorted people using standardized measures of that knowledge

AI changes the economics of both.

If an AI can generate a passable essay, solve a worksheet, explain a concept five ways, and role-play a tutor with endless patience, then the value of "producing the answer" collapses. That doesn't mean learning collapses. It means the evidence of learning needs to change.

In product terms: we've been measuring the output, not the understanding. AI is an output engine.

So the question becomes: what should education optimize for when answers are cheap?

What I'm seeing in my own AI workflows (and why it matters for kids)

In my day-to-day work, AI is not replacing "design." It's reshaping the tasks around it.

I use it for:

- First drafts of UX copy and alternatives I would not have written at 11:47pm

- Summarizing research notes and pulling out themes I can challenge

- Generating edge cases and "what could go wrong" lists

- Rubber-ducking flows, then immediately arguing with it (therapeutic)

- Turning messy thoughts into a structure I can actually critique

The pattern is consistent: AI is great at accelerating execution. It is not great at owning responsibility.

Someone still has to:

- Decide what matters

- Set goals

- Judge quality

- Verify reality

- Handle consequences

That "someone" is the human. For now. And even if you believe AI keeps climbing, society will still need people who can take responsibility for decisions in high-stakes contexts.

So education should double down on the skills that sit above execution.

A parent's hot take: "AI readiness" is not "teach every kid to code"

If you have a toddler, you already know the big battles are not algebra. They're:

- Sleep

- Emotions

- Routines

- Language

- "No, we don't lick the dog"

Those are not side quests. They are the main storyline.

Early development is compounding interest. The strongest preparation for an AI-heavy future is still:

- Language-rich interaction

- Secure relationships

- Play

- Self-regulation

- Curiosity

That's not sentimental. It's practical.

A child who can manage frustration, stay curious, and keep trying after failure has an advantage in any future. Including one filled with very persuasive machines competing for their attention.

What "AI-proof education" actually looks like

Not "ban AI." Not "replace teachers with chatbots." Not "every assignment becomes a prompt." More like this.

1. Teach problem framing, not just problem solving

When answers are abundant, leverage comes from asking better questions:

- What are we trying to accomplish?

- What constraints matter?

- What would success look like?

- What tradeoffs are we willing to accept?

This is the difference between "use a calculator" and "know what to calculate."

2. Make verification a core literacy

One of the most useful takeaways from Shumer's piece is the urgency. Even if you disagree with parts of the framing, the operational reality is the same: we are entering a world where confident, plausible output is everywhere.

Kids should learn:

- How to check claims

- How to triangulate sources

- How to run small tests

- How to spot when an AI is confidently wrong

In other words: epistemic hygiene. Clean thinking in a messy information environment.

3. Change assessment to value process and evidence

If a student can generate a polished essay in 30 seconds, essays do not disappear. They evolve.

Assessment starts to look like:

- Oral defenses ("talk me through your reasoning")

- Portfolios with iteration history

- Projects with real constraints and tradeoffs

- Collaboration with clear roles

- Evidence trails that show what was used, why, and what was rejected

We stop grading "the final artifact" and start grading "the thinking that produced it."

4. Treat AI as a tool, and ethics as a habit

Students will use AI. Adults will use AI. Teachers will use AI.

So we teach:

- What AI is (and what it is not)

- Bias, hallucinations, and limitations

- Privacy basics

- When AI use is appropriate

- What integrity looks like when tools are powerful

This should not be a one-off lecture. It should be practiced, like writing.

5. Keep the human stuff central, because it compounds

The skills that stay resilient over the long term are the ones AI amplifies rather than replaces:

- Judgment

- Communication

- Empathy

- Leadership

- Systems thinking

- Learning how to learn

These are not "soft skills." They are the hard part when everything else gets faster.

The real risk: training kids to be compliant answer machines

This is my biggest fear as a parent.

An AI-heavy future will reward:

- Originality

- Agency

- Judgment

- Taste

- Integrity

- The ability to learn quickly and adapt

But many school systems still reward:

- Compliance

- Memorization

- Low-variance answers

- Curiosity only when it fits the lesson plan

If we keep optimizing for the old scoreboard, we will raise kids who are excellent at tasks AI can do, and undertrained for the things AI makes more valuable.

What I'm trying at home (imperfectly)

No perfect-parent cosplay here. Just a few principles I'm attempting to follow:

- Protect attention. Boredom is allowed. It's where play starts.

- Prioritize language. Read aloud. Narrate the world. Ask questions with more than one right answer.

- Model skepticism. When I use AI, I talk about checking it. Not worshipping it.

- Build routines. Not because routines are cute, but because self-regulation is future-proof.

Also, I try not to let my kid see me arguing with an AI about button labels. That conversation is for adults.

The hopeful ending (because I'm a parent and I need one)

When I look at my 2-year-old, I don't see "future worker in an AI economy."

I see a kid learning how to be a person.

And that's the point.

If AI makes knowledge cheap, then being human becomes more valuable, not less. Education should reflect that.

Not by resisting technology, but by designing learning around what compounds across every possible future.

Curiosity. Judgment. Integrity. Relationships. The ability to learn, unlearn, and relearn.

And maybe, if we're lucky, the ability to park a train on a book without launching a household incident report.